The Green Machine

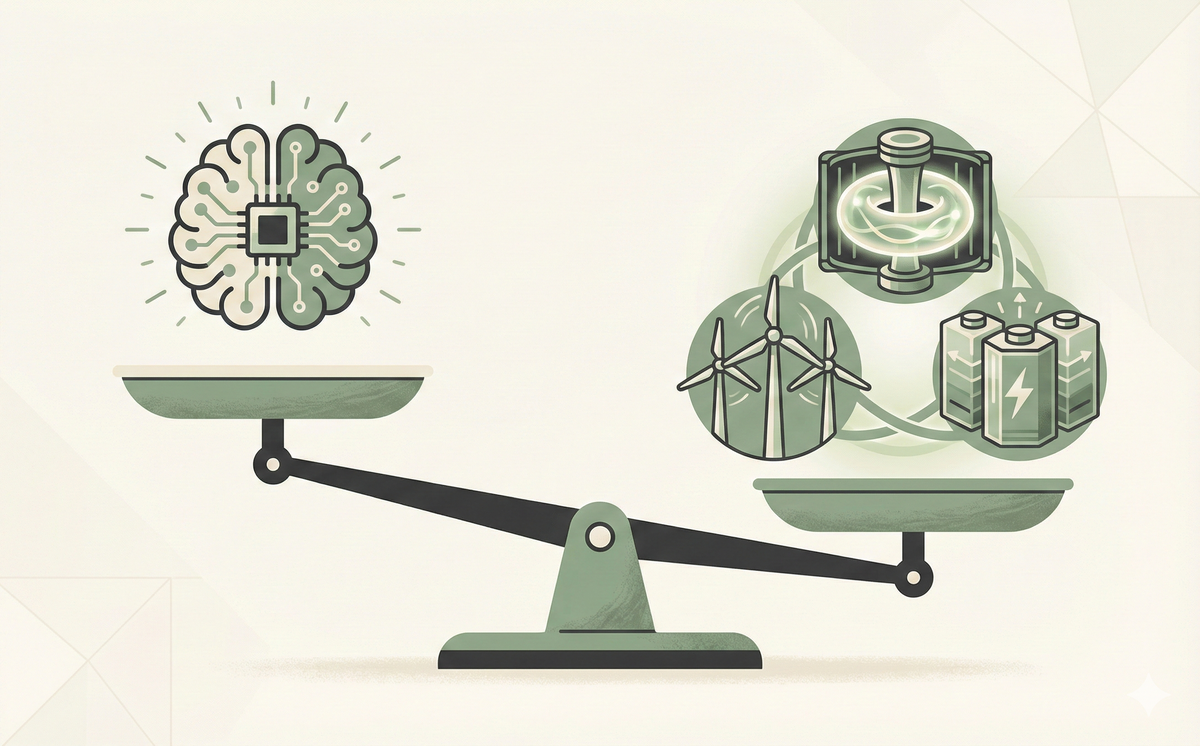

Is AI killing the planet or saving it? We look past the doom headlines to see how AI is optimizing our energy grid, finding new battery materials, and why it might be our best bet for a greener future.

The Green Machine: Is AI Key to a Sustainable Future?

Headlines love a good villain. And right now, the villain is the data center.

You've probably heard the stats: "Training one AI model uses as much energy as 100 homes do in a year" or "Generating one image uses as much energy as charging your phone."

These concerns are 100% valid. The current generation of Large Language Models (LLMs) is thirsty. These models require massive amounts of electricity and water. If we just kept building bigger models without changing anything else, we would miss our climate goals.

But that is only half the story.

If you look at the Net Impact—the energy AI uses versus the energy AI saves—the picture changes. AI isn't just a power drain; it is likely the most powerful tool we have ever built to solve the climate crisis.

Here is why AI might actually be the "Green Machine" we've been waiting for.

The Cost: Understanding the Invoice

Let's not hide the bill. AI is expensive to run.

Every time you ask ChatGPT a question, a server farm somewhere has to spin up billions of calculations.

- A standard Google Search costs almost nothing in energy.

- An AI Chat Query can, by some estimates, cost 10x to 30x more.

When you scale that to billions of people, the energy demand spikes. Microsoft and Google have both seen their emissions rise recently because they are building more data centers. This is the "Jevons Paradox": as technology becomes more efficient and useful, we use more of it, wiping out the savings.
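To see how the paradox plays out, here is a minimal Python sketch. The numbers are made up for illustration (they are not measurements), and `total_energy` is a hypothetical helper that simply multiplies energy per task by the number of tasks.

```python
def total_energy(energy_per_task: float, tasks: float) -> float:
    """Total energy = energy per task x number of tasks (arbitrary units)."""
    return energy_per_task * tasks


# Hypothetical baseline: 100 tasks at 1.0 unit of energy each.
before = total_energy(energy_per_task=1.0, tasks=100)

# Assumed scenario: the technology becomes twice as efficient (0.5 units per
# task), but it is now so useful that people run three times as many tasks.
after = total_energy(energy_per_task=0.5, tasks=300)

print(f"Before: {before} units, after: {after} units")
```

In this made-up scenario each task costs half as much, yet total consumption still climbs from 100 to 150 units, because usage grew faster than efficiency improved.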

But focusing only on the bill ignores the investment. We aren't burning energy for fun; we are spending it to buy solutions.

The Investment: How It Actually Works

We use AI to optimize complex systems that humans are too slow to manage.

1. Optimizing the Grid (The Air Traffic Controller)

The Problem: Renewable energy (Wind and Solar) is unpredictable. The wind doesn't always blow. To keep the lights on, grid operators keep backup coal plants running "just in case." It's inefficient, like keeping a second car running in your driveway.

The Solution: DeepMind trained AI to predict the power output of Google's wind farms 36 hours in advance.

The Analogy: Think of the power grid like Air Traffic Control. Without AI, the controllers (humans) have to leave massive gaps between "planes" (energy packets) because they aren't sure when the next one is coming. They play it safe.

AI is a controller that can see further into the future. It has a much better idea of when the wind will blow, allowing it to pack the "planes" tighter together. Google reported that this boosted the value of its wind energy by roughly 20% without building a single new turbine.
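The "gaps between planes" idea can be put into numbers with a toy Python sketch. This is a deliberately simplified model, not DeepMind's method or how a real grid is dispatched: `backup_needed` is a hypothetical function, and the megawatt figures are invented. It assumes the operator only counts on the wind it is sure of, which is the forecast minus the worst-case forecast error.

```python
def backup_needed(demand: float, wind_forecast: float, forecast_error: float) -> float:
    """Backup power to keep on standby, in megawatts (toy model)."""
    # Only the wind we are sure of counts toward meeting demand.
    dependable_wind = max(wind_forecast - forecast_error, 0.0)
    # Whatever demand is left must be covered by backup plants.
    return max(demand - dependable_wind, 0.0)


# Hypothetical numbers: 100 MW of demand and 60 MW of forecast wind.
# A rough forecast could be off by 40 MW; a sharper one by only 10 MW.
rough_forecast = backup_needed(demand=100.0, wind_forecast=60.0, forecast_error=40.0)
sharp_forecast = backup_needed(demand=100.0, wind_forecast=60.0, forecast_error=10.0)

print(f"Backup with rough forecast: {rough_forecast} MW")
print(f"Backup with sharp forecast: {sharp_forecast} MW")
```

With the same wind and the same demand, the sharper forecast cuts the standby requirement from 80 MW to 50 MW in this example. Nothing new was built; the operator simply needs a smaller safety margin.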

2. The Battery Breakdown (The Digital Chef)

The Problem: To ditch fossil fuels, we need better batteries. We need materials that hold more charge and use fewer hard-to-source metals (like lithium). Finding these materials is slow.

The Solution: Microsoft used AI to simulate 32 million potential materials.

The Analogy: Imagine you are trying to invent a new cake recipe, but you have to bake and taste every single one. It would take a lifetime.

AI acts like a Digital Chef. It can "taste" millions of recipes virtually, far faster than any kitchen could bake them. It narrows down the list from 32 million to just the 18 tastiest ones. Scientists then go into the lab and bake only those 18.

In about 80 hours, AI narrowed the field to a material that could use up to 70% less lithium. That search would have taken human chemists years of trial and error.
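The screening funnel described above can be sketched in a few lines of Python. This only illustrates the idea (score everything cheaply, keep the best few) and is not Microsoft's actual pipeline. `surrogate_score` is a hypothetical stand-in that returns a repeatable pseudo-random number where a real system would use a trained model, and the pool here is 10,000 numbered candidates rather than 32 million.

```python
import heapq
import random


def surrogate_score(candidate_id: int) -> float:
    """Hypothetical 'virtual taste test': a cheap score between 0 and 1.

    A real system would run a trained model here; this placeholder just
    derives a repeatable pseudo-random number from the candidate's ID.
    """
    return random.Random(candidate_id).random()


def shortlist(candidates, keep: int) -> list:
    """Score every candidate and return the `keep` highest-scoring ones."""
    return heapq.nlargest(keep, candidates, key=surrogate_score)


# Assumed toy pool of 10,000 candidate "recipes", narrowed down to 18.
finalists = shortlist(range(10_000), keep=18)
print(f"Send {len(finalists)} candidates to the lab: {finalists}")
```

The expensive step (the lab) only ever sees the shortlist. That is where the time savings come from: the cheap virtual score runs on everything, and the slow real-world test runs on almost nothing.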

3. Efficiency in the Cloud

Even the data centers themselves are getting smarter. Google used AI to control the cooling fans and windows in its server farms, reducing cooling energy by up to 40%. It's like having a smart thermostat that adjusts every second to save you money, but on an industrial scale.

The Counterpoint: The "Energy Debt"

We have to be honest: We are currently in debt.

We are spending massive amounts of carbon now to train models that might save carbon later.

- The Risk: If AI development stalls, or if we use it mostly to generate memes instead of fusion reactors, we just burned a lot of coal for nothing.

- The Reality: "Efficiency" often leads to more consumption. If AI makes flying planes cheaper (by optimizing fuel), people might just fly more.

This is why "Net Positive" is a goal, not a guarantee. It requires us to actively point these tools at the right problems.

What Can You Do? (The "Small Model" Rule)

While the giants solve the big problems, you can help by being a "Smart User."

Not every question needs a genius.

- Don't take a Ferrari to the grocery store. You don't need GPT-5 or Claude 4.5 Opus to write a "Thank You" email. Use faster, smaller models (like GPT-4o-mini or Gemini 3 Flash). They use a fraction of the energy.

- Be specific. Better prompts mean fewer retries. If you have to ask the AI to rewrite something 10 times, that's 10x the energy. See our guide on The Intent Gap to get it right the first time.
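Both tips come down to simple multiplication, which a short Python sketch makes concrete. The energy figures are hypothetical relative units chosen for illustration (the real gap between a large and a small model varies), and `session_energy` is an invented helper, not part of any AI provider's tools.

```python
# Hypothetical relative energy cost per request (not measured values).
LARGE_MODEL = 10.0
SMALL_MODEL = 1.0


def session_energy(energy_per_request: float, attempts: int) -> float:
    """Energy for one task = cost per request x number of attempts."""
    return energy_per_request * attempts


# A vague prompt on a large model that takes 10 tries to get right...
vague_prompt_large_model = session_energy(LARGE_MODEL, attempts=10)

# ...versus a specific prompt on a small model that works the first time.
clear_prompt_small_model = session_energy(SMALL_MODEL, attempts=1)

print(f"Vague prompt, large model: {vague_prompt_large_model} units")
print(f"Clear prompt, small model: {clear_prompt_small_model} units")
```

Under these assumed numbers the first session uses 100 units and the second uses 1. The two habits multiply together, so picking the right-sized model and writing a clear prompt helps more than either does alone.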

The Bottom Line

Is AI a challenge for the grid? Yes. Is it a disaster? No.

It is a tool. And like any powerful tool, it requires responsibility. But if we use it right, AI won't just be the thing that consumes our power—it will be the thing that gives us the power to save the world.

Don't just read about it. Try it.

You understand the concept. Now see how it works in the real world with this step-by-step guide.

Protect Your Data